Thumbs up to creators of algoexpert.io

First of all, I’m not a shill, just an ordinary satisfied user of the service. I wonder if every sponsored stooge’s testimonial starts like this ;-)

Anyway, I’ve recently been trying to improve my coding skills and found that just doing exercises on leetcode.com was not enough for me. I was definitely missing the bigger picture and had an unstructured way of thinking.

Luckily for me, I came across algoexpert.io. To be honest, it hasn’t solved all my problems and hasn’t yet helped me land my dream job, but it has greatly improved my overall understanding and boosted my self-confidence. What attracts me the most is that with algoexpert you get not only the questions and the answers but also a concise yet highly detailed conceptual explanation of every problem. That helps build the fundamental knowledge that is essential for a coding interview.

I’d highly encourage you to check out some of the free questions on their website and see for yourself.

Good luck!

Checkout a single file from Git repo

This one is going to be quick; I’m putting it here just for future reference.

Task

Check out a single file from Git without cloning the whole repo

Solution

git archive --prefix=config/ --remote=git@git.example.com:/app.git HEAD:app/config/ config.yaml | (cd /usr/local/app && tar xf -)
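For scripting the same trick, here is a minimal Python sketch of the pipeline above. The helper names `build_archive_cmd` and `fetch_single_file` are made up for illustration. It assumes `git` is on the PATH and that the remote server permits `git archive --remote` (some hosting services disable it).

```python
import subprocess
import tarfile


def build_archive_cmd(remote, tree, filename, prefix=""):
    """Return the argv for `git archive` that exports one file from a remote tree."""
    cmd = ["git", "archive", f"--remote={remote}"]
    if prefix:
        cmd.append(f"--prefix={prefix}")
    cmd += [tree, filename]
    return cmd


def fetch_single_file(remote, tree, filename, dest, prefix=""):
    """Stream the tar archive produced by git straight into the `dest` directory."""
    cmd = build_archive_cmd(remote, tree, filename, prefix)
    with subprocess.Popen(cmd, stdout=subprocess.PIPE) as proc:
        # "r|" reads the tar as a non-seekable stream, like `tar xf -` does.
        with tarfile.open(fileobj=proc.stdout, mode="r|") as tar:
            tar.extractall(path=dest)
    if proc.returncode != 0:
        raise RuntimeError(f"git archive exited with status {proc.returncode}")
```

Calling `fetch_single_file("git@git.example.com:/app.git", "HEAD:app/config/", "config.yaml", "/usr/local/app", prefix="config/")` should be equivalent to the one-liner, with the file ending up in /usr/local/app/config/.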

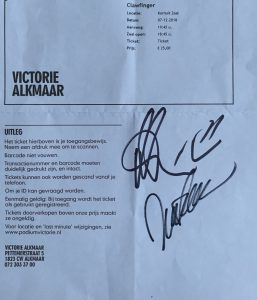

Clawfinger memories 2018

This is from the 25 Years DDB show in Alkmaar, Netherlands.

ROP interactive guide for beginners by Vetle Økland

Just yesterday I came across a post on Reddit that pointed me to an amazing “Interactive Beginner’s Guide to ROP”. It’s nicely worded and has a couple of puzzles which I, as a total newbie, found very exciting. Leaving the solutions below for a later me…

\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x00\x20\x00\x00\x00\x00\x00\x00\x00\x55\x00\x00\x00\x00\x00\x00\x00\x39\x00\x00\x00\x00\x00\x00\x00\x0e

Where GREP came from – Brian Kernighan

In: Apple, FreeBSD, HP-UX, Linux, Solaris

When the past meets the future

The subject says it all.

Upgrading to MySQL 8? Think of the default authentication plugin

As explained in a post at mysqlserverteam.com, the default authentication plugin has changed from mysql_native_password to caching_sha2_password. That will break PHP-based applications, because at the time of writing PHP doesn’t support caching_sha2_password. Please keep an eye on the related request #76243. Once it’s implemented it will be possible to switch to caching_sha2_password, but until then set “default_authentication_plugin = mysql_native_password” in the [mysqld] section of your my.cnf file or start mysqld with --default-authentication-plugin=mysql_native_password.
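Before upgrading a batch of servers it can be handy to verify that the override is really in place. Below is a rough Python sketch that reads the setting from the text of a my.cnf file. The function name `default_auth_plugin` is hypothetical, and the parsing is deliberately naive: it relies on Python's `configparser`, which does not follow `!include` or `!includedir` directives.

```python
import configparser


def default_auth_plugin(cnf_text):
    """Return the plugin set under [mysqld] in my.cnf text, or None if it is absent."""
    parser = configparser.ConfigParser(
        allow_no_value=True,   # my.cnf allows bare flags such as skip-name-resolve
        strict=False,          # tolerate repeated sections and options
        interpolation=None,    # treat '%' in values literally
    )
    parser.read_string(cnf_text)
    if not parser.has_section("mysqld"):
        return None
    for key, value in parser.items("mysqld"):
        # MySQL treats dashes and underscores in option names as interchangeable.
        if key.replace("-", "_") == "default_authentication_plugin":
            return value
    return None
```

For a file containing the line from this post under `[mysqld]`, the function returns `"mysql_native_password"`; a `None` result means the server falls back to its built-in default.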

“WT_CONNECTION.open_session: only configured to support 20020”

Frankly speaking, the explanation provided in SERVER-30421 and SERVER-17364 is a bit vague and “hand wavy” to me but at least there are steps that could help mitigate it:

- Decrease idle cursor timeout (default value is 10 minutes):

In mongodb.conf:

setParameter:
  cursorTimeoutMillis: 30000

Using mongo cli:

use admin
db.runCommand({setParameter:1, cursorTimeoutMillis: 30000})

- Increase session_max:

storage:
  wiredTiger:
    engineConfig:
      configString: "session_max=40000"
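The two mitigations can also be expressed as plain data, which makes the units explicit: 30000 ms is 30 seconds, compared with the 10-minute default of 600000 ms. This is a small Python sketch with made-up helper names (`cursor_timeout_command`, `session_max_config`). It only builds the documents and does not talk to a server.

```python
def cursor_timeout_command(seconds):
    """Build the setParameter command document for the idle cursor timeout."""
    # The command name must be the first key; Python dicts keep insertion order.
    return {"setParameter": 1, "cursorTimeoutMillis": int(seconds * 1000)}


def session_max_config(session_max):
    """Build the nested storage section that carries WiredTiger's session_max."""
    return {
        "storage": {
            "wiredTiger": {
                "engineConfig": {"configString": f"session_max={session_max}"}
            }
        }
    }
```

Assuming the PyMongo driver is installed, the first document could be sent with something like `client.admin.command(cursor_timeout_command(30))`. The second mirrors the config file layout and takes effect only after mongod is restarted with it.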

Changing Oplog size or when root role is not enough

Managing MongoDB sometimes involves increasing the Oplog size since the default setting (5% of free disk space if running wiredTiger on a 64-bit platform) is not enough. If you’re running MongoDB older than 3.6, that requires the manual intervention described in the documentation. It’s pretty straightforward even though it requires node downtime as part of a rolling maintenance operation. More importantly, the documentation glosses over the fact that having the “root” role alone is not enough to create a new oplog.

> db.runCommand({ create: "oplog.rs", capped: true, size: (32 * 1024 * 1024 * 1024) })

{

"ok" : 0,

"errmsg" : "not authorized on local to execute command { create: \"oplog.rs\", capped: true, size: (32 * 1024 * 1024 * 1024) }",

"code" : 13

}

Granting an additional “readWrite” role on “local” db fixes the problem:

db.grantRolesToUser("admin", [{role: "readWrite", db: "local"}])

As stated in SERVER-28449 that has been done intentionally:

This is intentional and is due to a separation of privileges. The root role is a super-set of permissions affecting user data specifically, not system data, therefore the permissions must be explicitly granted to perform operations on local.

So, please, keep that in mind and don’t flip out =)
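For reference, the two commands from this post can be written out as command documents, with the size arithmetic spelled out (the `size` of a capped collection is given in bytes). This is a Python sketch with hypothetical helper names. It builds the documents only; sending them to a server is left to whatever driver you use.

```python
GIB = 1024 ** 3  # bytes in one gibibyte


def create_oplog_command(size_gib):
    """Build the `create` command for a capped oplog.rs of the given size."""
    return {"create": "oplog.rs", "capped": True, "size": size_gib * GIB}


def grant_local_readwrite_command(user):
    """Build the grantRolesToUser command that adds readWrite on the local db."""
    return {
        "grantRolesToUser": user,
        "roles": [{"role": "readWrite", "db": "local"}],
    }


# 32 * 1024 * 1024 * 1024 from the shell session above, expressed in bytes.
assert create_oplog_command(32)["size"] == 34359738368
```

The grant has to be run against the database where the user is defined, and the create command against `local`, matching the shell session above.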

Yandex internal CTF 2017

This year’s CTF at Yandex brought not only excitement and sleepless nights but also a bunch of awesome swag.

In: Life, Linux, Security